You now also know how to split a `tf.data.Dataset` into a train and a test set. The examples in this Jupyter Notebook showed you how to properly combine TensorFlow's Dataset API and Keras. You hopefully also noticed that having the data in memory is faster than having it loaded by a generator.

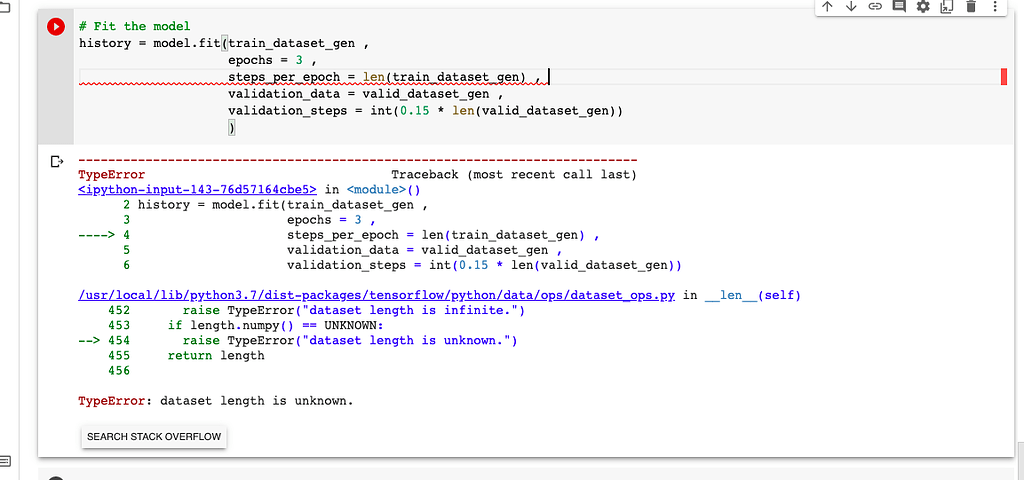

You have now seen two examples of how to load data into the `tf.data.Dataset` format. It can be a bit hard to verify that your dataset is still working as expected. Luckily there are methods, such as `dataset.as_numpy_iterator()`, which allow you to inspect and verify your data.

If you still have to load or adjust data, it's easy to create a generator which becomes a dataset. By default, the generator you hand to `from_generator` is called without arguments, so you can't pass anything to it directly. It takes a bit of Python puzzling to realize that you can pass the arguments to an outer function, which then creates an argument-free generator inside itself, using the arguments you passed to the outer function.

Another common error is `TypeError: 'generator' must be callable`. It is raised when you pass an already-created generator object instead of the function that creates it.

A data pipeline is the process of taking your raw data and transforming it into a final product. It has three components: a source, processing steps, and a destination. If you want to package a dataset for TensorFlow Datasets (TFDS), first check the list of existing datasets to see if the one you want is already present. Otherwise, `tfds new` creates a default template with three parts: `_info` (dataset metadata), `_split_generators` (downloads and splits the data), and `_generate_examples` (the example generator).

When the dataset repeats indefinitely, make sure you set `steps_per_epoch` to the number of batches you want to supply during training. Normally this number of batches should roughly cover the entire dataset. The training log for such an epoch looks like this: `1875/1875 - 5s 2ms/step - loss: 2.3345 - accuracy: 0.8567`.
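The closure trick described above, an outer function that receives the arguments and returns an argument-free generator, can be sketched in plain Python. The names `make_generator`, `features`, `labels`, and `batch_size` are illustrative assumptions and do not come from the original notebook.

```python
def make_generator(features, labels, batch_size=1):
    """Return an argument-free generator function bound to the given data.

    Hypothetical helper: the name and parameters are assumptions for this sketch.
    """
    def generator():
        # The inner function takes no arguments; it reads features, labels
        # and batch_size from the enclosing scope (a closure).
        for start in range(0, len(features), batch_size):
            stop = start + batch_size
            yield features[start:stop], labels[start:stop]

    return generator


# Toy data standing in for real samples.
features = [[0.0], [1.0], [2.0], [3.0], [4.0]]
labels = [0, 1, 0, 1, 0]

gen_fn = make_generator(features, labels, batch_size=2)

# gen_fn is callable and can be called again to restart iteration.
batches = list(gen_fn())
```

It is `gen_fn` itself, not `gen_fn()`, that would be handed to `tf.data.Dataset.from_generator`, together with a description of what it yields (`output_signature` in recent TensorFlow releases). Passing `gen_fn()` instead is the usual cause of the `'generator' must be callable` error.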
Having your data in a dataset is also one of the fastest ways to load the data. If your data fits in memory, it's easy to turn your NumPy array into a dataset. A common error here is `ValueError: When providing an infinite dataset, you must specify the number of steps to run.` It appears when the dataset repeats indefinitely and training is not told how many steps make up one epoch.

The notebook's setup imports `tensorflow` as `tf` and `numpy` as `np`, prints the TensorFlow version used in this tutorial (`tf.__version__`), and defines a helper, `get_and_compile_model()`, which builds a very simple `tf.keras.Sequential` model, compiles it with `loss='sparse_categorical_crossentropy'` and an Adam optimizer with a learning rate of `0.001`, and returns it. The MNIST data needed throughout the notebook is loaded once with `load_data()` into `(x_train, y_train), (x_test, y_test)` and scaled with `x_train, x_test = x_train / 255.0, x_test / 255.0`. The batch settings are `BATCH_SIZE = 32` and `STEPS_PER_EPOCH = len(x_train) // BATCH_SIZE`. Note that `//` is integer division, which discards any remainder.

Loading everything into memory stops working at some point: code that recursively loads images from a directory, using the directory names as labels, crashes with a memory error once there are more images. A generator is the alternative. A typical Python generator class is initialized with arguments such as `__init__(self, list_IDs, labels, batch_size=32, dim=(32, 32, 32), n_channels=1, n_classes=10, shuffle=True)`. When such a generator is wrapped with `tf.data.Dataset.from_generator(generator, ...)`, the declared types and shapes have to match the structure the generator yields. For a network with 3 inputs and 2 outputs it can take many tries to get that structure right. This approach was reported to work in TensorFlow 2.1.0.
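As a minimal sketch of the in-memory path described above, the following turns NumPy arrays into a repeating dataset and passes `steps_per_epoch` so that the infinite-dataset `ValueError` does not occur. The random arrays (64 samples of 28x28) and the one-layer model are assumptions standing in for MNIST and for the notebook's `get_and_compile_model()`, whose layers are not shown in the text.

```python
import numpy as np
import tensorflow as tf

# Assumption: small random arrays standing in for the MNIST images and labels.
x = np.random.rand(64, 28, 28).astype("float32")
y = np.random.randint(0, 10, size=(64,)).astype("int64")

BATCH_SIZE = 32
STEPS_PER_EPOCH = len(x) // BATCH_SIZE  # integer division: 64 // 32 == 2

# Build the dataset from the in-memory arrays; repeat() makes it infinite.
dataset = (
    tf.data.Dataset.from_tensor_slices((x, y))
    .shuffle(len(x))
    .batch(BATCH_SIZE)
    .repeat()
)

# Inspect one batch to verify shapes before training.
first_x, first_y = next(dataset.as_numpy_iterator())

# Assumption: a deliberately tiny model, not the notebook's architecture.
model = tf.keras.Sequential([
    tf.keras.Input(shape=(28, 28)),
    tf.keras.layers.Flatten(),
    tf.keras.layers.Dense(10, activation="softmax"),
])
model.compile(
    loss="sparse_categorical_crossentropy",
    optimizer=tf.keras.optimizers.Adam(0.001),
    metrics=["accuracy"],
)

# The dataset never ends, so the number of steps per epoch must be given.
history = model.fit(
    dataset, epochs=1, steps_per_epoch=STEPS_PER_EPOCH, verbose=0
)
```

With the real MNIST training set of 60,000 images and a batch size of 32, the same integer division gives 1875 steps per epoch.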
